What LP, ops, and ecom teams miss when they only see dashboards and alerts

Welcome to the penthouse, where LP, ops, and ecom teams are querying data, reviewing dashboards, and acting on partial returns data or alerts someone else surfaced. What they seldom see is the foundation and structural supports that are holding them up below.

Vishal Patel, Appriss’s Chief Product and AI Officer, has spent a decade building the layers underneath that experience. His clearest insight is that value compounds only when all 3 layers of the AI work together.

Data quality drives model accuracy (data intelligence layer) → model accuracy makes the system’s recommendations trustworthy (decision intelligence layer) → every decision, transaction, activity can be drilled into (workflow intelligence layer). The latest addition to this last layer, Appriss’ Sidekick, brings that workflow intelligence into natural language, but the AI has been there all along.

Patel works with customers from their first conversation through go-live and beyond. He’s heard every version of “we didn’t realize the system could do that” because teams missed out on value when they never understood what was running underneath.

Now, he’s breaking down what each layer does and how to use it.

Key Takeaways:

- Most teams inherit static return policies that fraudsters and abusers have already mapped and learned to exploit

- Data quality drives model accuracy, which determines whether your team trusts the recommendations whether it is a real-time decision on a return or an alert about internal loss.

- Complete data flowing through all three AI layers produces an 8 to 12 percent reduction in return dollars

When the foundation is built on static rules

Here’s what most teams are working with before they move to the 3-layer structure: a return policy written by someone who no longer works there, adjusted during a fraud spike, never meaningfully revisited.

It has thresholds like 4 returns per year and 30-day windows that made sense when they were set. But now? They’re outdated.

“Fraudsters are going to figure out those boundaries, and start to stay within those boundaries, so will abusers who don’t even realize they’re abusing, like wardrobers and bracketers,” says Patel. “Perhaps by changing their credit card, changing their identity, and only doing 3 returns at a time, because they figured out that the fourth one gets declined.”

The same threshold flagging a bad actor eventually flags your best customers making 25 purchases a year. A static rule has no way to tell the difference.

That’s the constraint of building on rules instead of on a 3-layer, AI-led structure. Fraudsters adapt faster than anyone can update a policy manually.

The 3-layer AI system your team can leverage

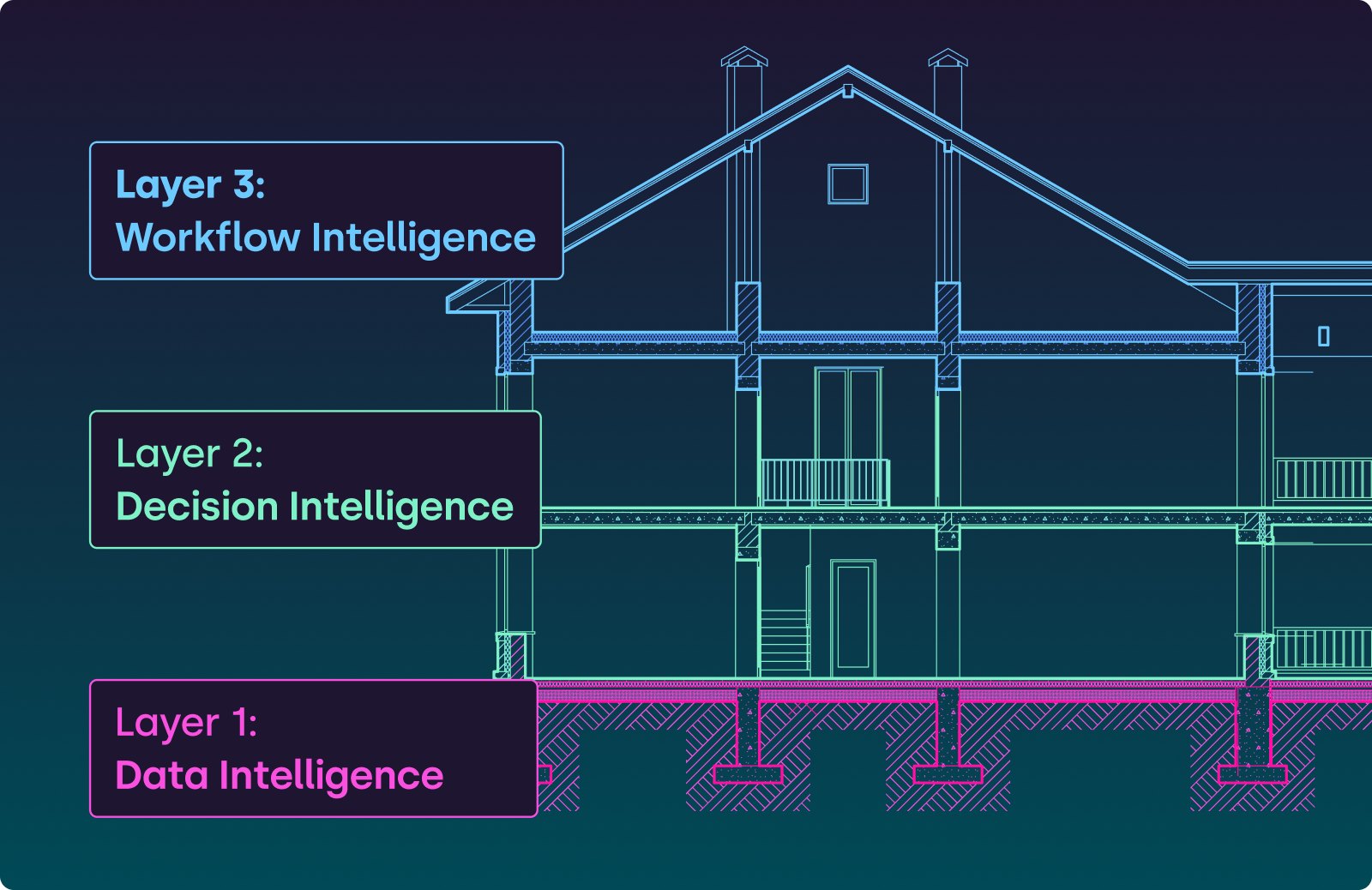

When these practitioners think about AI in their loss system, they’re thinking about what’s visible on the top floor: a risk score, an alert, a decline. What Patel explains is the load-bearing structure helping produce that output.

- Layer 1 is the foundation: algorithmic AI, data intelligence, building the customer picture from every channel.

- Layer 2 is the structural support: machine learning, decision intelligence. Same pattern in, same decision out.

- Layer 3 is the top floor: generative AI, workflow intelligence.

Working only on the top floor without understanding what’s supporting it is like redecorating the penthouse while the foundation cracks.

Layer 1: What your foundation is doing

Layer 1 is the foundation handling consumer identity resolution across cards, emails, phone numbers, and channels. And it’s often doing this invisibly. Appriss pulls cross-retailer consortium signals from 250M unique IDs and normalizes transaction data across every system you operate, from in-store, to online, to customer service center.

A customer who buys online and returns in-store at 3 different locations isn’t showing up as 3 separate low-risk people. Layer 1 has already connected those identities. The decision your team sees at the POS is scoring the full behavioral history.

Where the foundation cracks: if your POS data feed is incomplete or customer service center returns aren’t flowing into the platform, Layer 1 works with a partial picture. With a single channel view, your layers stand incomplete and lead to poor decisions

Layer 2: What the structural support is catching

The machine learning models in Layer 2 are the structural beams that carry the load of every decision. They score the unified customer data the foundation built.

They can approve, warn, or decline in under one second at the POS, Order Management System (OMS), or customer service center, with decisions being consistent across every transaction, every associate, every store.

What it catches varies by vertical:

- Apparel → repeat event-purchase-and-return behavior

- Sporting goods → catches seasonal gear trials and promo abuse

- Beauty → catches gift-with-purchase exploitation (13% lower promo expenses)

- Grocery → surfaces cashiers ringing produce as “misc items”

Remember: Layer 2 models use core machine-learning models that are predictable and auditable. Every decision has traceable and explainable factors for compliance review. Generative AI lives in Layer 3, not here.

Layer 3: What the top floor provides between decisions and investigations

Sidekick is part of what your team interacts with on the top floor. It works on top of the decisions the structural support produces, and changes how fast your team can act on it.

An analyst can ask Sidekick, “Which items have the highest return rates this month? Show me any that spiked compared to last quarter.” That kind of cross-period returns analysis used to require pulling reports and comparing spreadsheets. It now takes seconds.

Users based on role can also ask “Why was this return declined?” or “How are these multiple Loyalty IDs connected together?” and get a plain-language explanation of the risk signals and data that drove the decision.

The human-in-the-loop design is intentional. While Sidekick surfaces and prepares, your team makes the choice to decide and act.

How the top floor bolsters the foundation

The human actions your team takes on the top floor become signals that travel back down through the entire structure. A shopper warned by the system, who then completes 3 clean transactions, is being scored differently by the time the 4th transaction processes.

Every decision or recommendation made is incorporated into the next recommendation. If somebody is warned, 90%+ of shoppers go on to be great, profitable customers. The next purchase updates that risk model.

This is the mechanism behind the risk threshold dial. Appriss monitors model performance on your behalf and adjusts sensitivity based on what your business needs. Your team doesn’t tune the models. You set how aggressive or conservative you want decisions to be, and the system calibrates accordingly.

Where this matters most: false positives. The 99.99% decision accuracy can give retailers peace of mind that their best customers are protected while fraud and abuse is surgically removed.

Complete load-bearing integrity

When all 3 AI layers work together—foundation, structural support, and top floor—the outcomes show up across return rates, team productivity, and counter-level consistency.

Return rate reduction: Appriss customers typically move from a 15% return rate to 13% after deployment. The 8–12% reduction in return dollars comes from the model running consistently on complete data.

LP team throughput: Investigation times drop through automated decisions in under one second. Engage approves, warns, or declines at the counter. When your team does need to investigate, Sidekick handles the pre-processing (filtering, prioritizing, and surfacing what matters), still reducing the investigation time.

Consistent decisions at the counter: The return counter stops being a negotiation. The associate is no longer making a gut call when return decision accuracy is 99.99%.

Most teams only work on the top floor because that’s what’s visible. They’re querying dashboards, adjusting thresholds, chasing alerts without ever checking whether the foundation underneath can support the weight.

Understanding the architecture changes what you can control. When you know what each layer does, you stop adjusting outputs haphazardly and start managing the foundation, the models, and the workflow as a complete, unified system.

Frequently asked questions:

How does the system prevent data from one retailer being exposed to another retailer’s training models?

Models learn from behavioral patterns, not identities. When Appriss Retail trains a wardrobing model, it’s learning the signature of what abuse behavior looks like: buying event-appropriate clothing, keeping it for 4–7 days, returning within policy, repeating across seasons. The model doesn’t care who the person is. Individual retailer data is aggregated and anonymized before any model touches it.

This is also why the consortium model is valuable: Appriss sees 40% of all U.S. retail transactions across 250M unique customer IDs. Cross-retailer fraud patterns—like receipt reuse attempts connected across store locations and retail partners—only become visible at that scale. A single retailer, even a large one, can’t see those patterns alone.

What’s the difference between the machine learning models making decisions and the generative AI in Sidekick?

Layer 2 machine learning models are deterministic: given the same behavioral pattern, they produce the same output every time. They score against labeled fraud examples (wardrobing, tender laundering, tender swapping) with known inputs and reproducible results. Every return authorization decision is fully traceable—the factors that drove it are available for compliance, customer service, or legal review.

Layer 3 generative AI (Sidekick) operates at the workflow layer. It doesn’t make approve/warn/decline calls. It surfaces insights, writes searches, assigns investigations, and pre-processes exception queues. The human remains in the loop: Sidekick prepares, your team decides and acts.

When IT and audit ask whether generative AI is making fraud decisions, the answer is no. GenAI accelerates workflow. ML models make decisions. They’re different tools at different layers.

How do I know the model is working correctly if I can’t see the rules it’s following?

The model is scoring behavioral patterns against 20+ years of tagged transaction data. But you can measure whether it’s working through three outputs your team already tracks: decision accuracy (approve/warn/decline correctness), false positive rate (legitimate customers incorrectly flagged), and return rate reduction (the financial outcome).

Appriss monitors model performance on your behalf. QBRs are structured around: what’s your current return rate, what was it at deployment, how many overrides are happening, and should we adjust the risk threshold dial. Your team doesn’t manage the model. You manage the outcomes it’s producing.

If something feels wrong—like a sudden spike in warnings for a customer segment that shouldn’t be high-risk—that’s a signal to check data feed completeness at Layer 1, not model accuracy at Layer 2. Most “model problems” trace to incomplete data upstream.

How does the 3-layer AI architecture apply beyond returns to shrink and operational risk?

The same foundation works across Total Retail Loss. Layer 1 unifies data from stores, DCs, and customer service. Layer 2 models score patterns whether it’s return fraud, inventory shrink, or operational incidents. Layer 3 surfaces what matters across all loss categories.

For shrink: the system connects POS exceptions, inventory discrepancies, and associate behavior patterns that would look unrelated in siloed systems. A cashier voiding transactions at register 3 while inventory adjustments spike in the same department gets flagged because Layer 1 sees the connection: catching internal fraud that would otherwise stay hidden.

For operational risk: incident reports, safety events, and compliance violations flow into the same decisioning layer. Store managers can ask Sidekick “show me all slip-and-fall incidents in the Northeast region this quarter, assign the top 3 to me” without waiting for IT to build a custom report.

When all three layers work on complete data across returns, shrink, and operations, retailers get visibility into total retail loss instead of managing three separate problems with three separate tools.